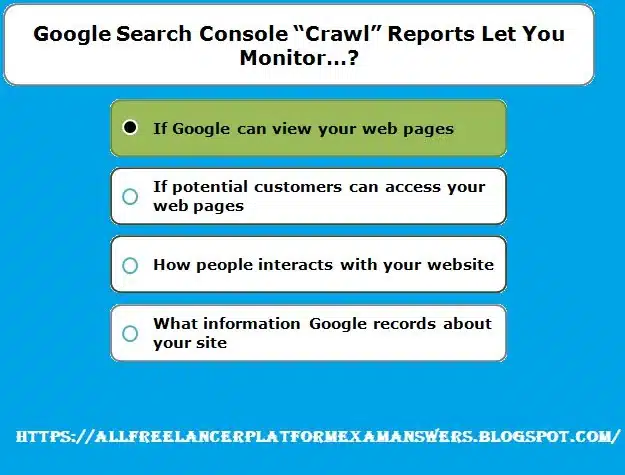

If you own a website, you want to ensure that it is operating at its peak efficiency. Google Search Console is one tool for doing that. This tool provides you with useful information on how your website is doing in search results. Crawl reports are one of Google Search Console’s most crucial tools. You can use these reports to check if your website is correctly crawled and indexed and to track its performance. The following 10 approaches help you keep an eye on your website using Google Search Console crawl reports.

A solid online presence is essential for any company or website owner in the modern digital age. One of the most effective tools at your disposal is Google Search Console (GSC), a free service from Google that helps you monitor, maintain, and troubleshoot your website’s appearance in Google search results. Because GSC provides a wealth of information about how your site is performing, you can use it to optimize your content and raise your site’s search engine rankings.

Crawl reports, a crucial feature of Google Search Console, show how Googlebot interacts with your website. By keeping an eye on them, you can make sure that your website is easily found, correctly indexed, and free of errors that can hurt user experience and search engine performance. In this article, we’ll examine the main features of Google Search Console crawl reports and how you can use them to improve your website.

1. Understanding Google Search Console Crawl Reports

Crawl Reports: A Comprehensive Overview

Crawl reports describe the crawling process: how frequently Googlebot visits your site, which pages it crawls, and any problems or errors it ran into along the way. By tracking and analyzing this data, you can improve both the user experience and the search engine visibility of your website.

Googlebot: The Web-Crawling Powerhouse

To appreciate the value of crawl reports, you first need to understand how Googlebot works. Googlebot, Google’s web crawler, is in charge of finding new and updated web pages to add to the index. It moves through websites by following links from one page to another, gathering and assessing the content it finds along the way. This process is called crawling.

After Googlebot has crawled a webpage, the page’s content is analyzed and added to Google’s enormous index, which makes it available to users through Google search. Crawling is ongoing, and how often it happens depends on the size and importance of your website as well as how frequently its content is updated.

Google Search Console’s crawl reports give you a detailed view of this crawling process. They let you keep track of Googlebot’s activity on your website and spot potential problems that can obstruct indexing or impair search engine performance.
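To make the link-following idea concrete, here is a minimal Python sketch of a breadth-first crawl. It is only an illustration, not a description of how Googlebot is actually built: the `SITE` dictionary, the `example.com` URLs, and the `LinkExtractor` and `crawl` names are all assumptions made for this example, and the pages are held in memory rather than fetched over HTTP.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(pages, start_url):
    """Breadth-first walk over `pages` (URL -> HTML), following links.

    Returns (URLs visited in discovery order, linked URLs that do not exist).
    """
    queue = deque([start_url])
    seen = {start_url}
    visited, missing = [], []
    while queue:
        url = queue.popleft()
        html = pages.get(url)
        if html is None:
            missing.append(url)  # a real crawler would log a 404 here
            continue
        visited.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited, missing


# Hypothetical three-page site; /old-post is linked but missing.
SITE = {
    "https://example.com/": '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog": '<a href="/old-post">Old post</a>',
}

visited, missing = crawl(SITE, "https://example.com/")
print("Crawled:", visited)
print("Broken links:", missing)
```

The `missing` list is the kind of information a crawl report surfaces as URL errors: pages that are linked from somewhere on the site but cannot be retrieved.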

2. Accessing Crawl Reports in Google Search Console

Step-by-Step Guide to Accessing Crawl Reports

To access crawl reports in Google Search Console, follow these simple steps:

- Log in to your Google Search Console account. If you haven’t already set up your account, you’ll need to create one and verify your website ownership.

- Once logged in, select the desired property (website) from the dropdown menu in the top-left corner of the screen.

- In the left-hand sidebar, click on “Crawl” or “Legacy tools and reports” (depending on the version of Google Search Console you’re using). In current versions of Search Console, the Crawl Stats report is opened from “Settings” instead.

- In the expanded menu, click on “Crawl Stats” or “Crawl Errors” to access the respective reports.

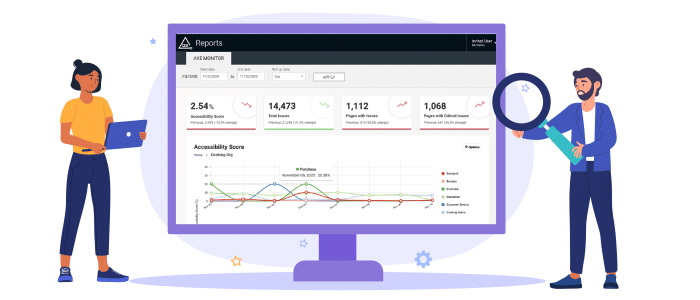

Understanding the Crawl Reports Dashboard

The crawl reports dashboard is divided into several sections, each providing valuable insights into different aspects of the crawling process:

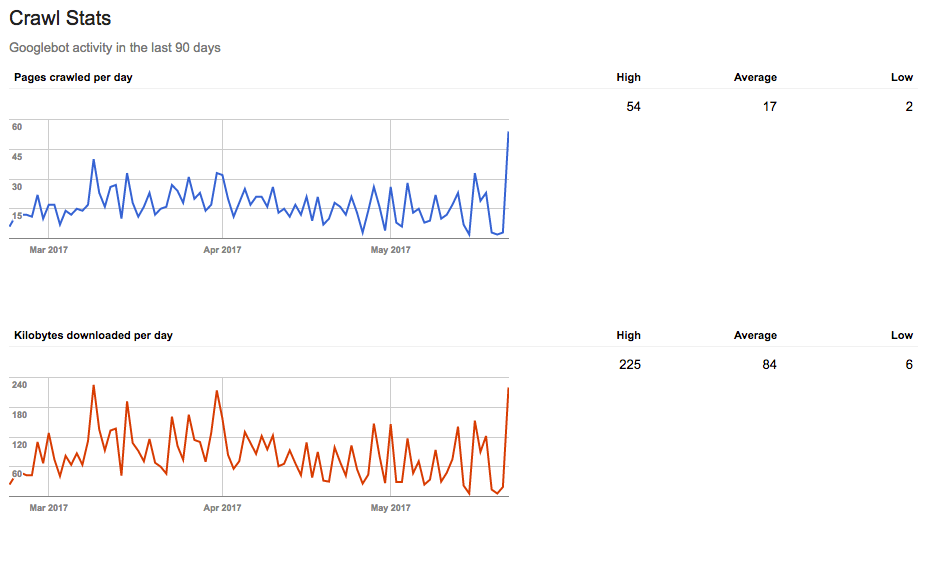

- Crawl Stats: The crawl activity on your website is represented graphically in this section, along with the number of pages crawled daily, the volume of data downloaded daily, and the average download time per page. You may learn more about how Googlebot interacts with your website and potential crawling and indexing problems by analyzing these statistics.

- Crawl Errors: This section lists any errors that Googlebot encountered while visiting your site. They fall into two basic categories: site errors and URL errors. Site errors, such as problems with server connectivity or DNS resolution, affect your entire website. URL errors, such as broken links, 404 errors, and blocked resources, are specific to particular pages.

- Crawl Issues: This section describes particular crawling problems in more detail, such as blocked URLs or pages with anomalies. You can examine a list of the affected pages, along with the type of issue and the date it was detected.

- Sitemaps: You can upload and manage XML sitemaps for your website in this section, which will make it easier for Googlebot to find and crawl your website. Also, you can see the status of the sitemaps you’ve uploaded, the number of URLs that have been indexed, and any issues or warnings that have been found.

Knowing the various crawl reports dashboard elements will enable you to more accurately evaluate the state of your website and take the necessary steps to remedy any issues that may be affecting how well it performs in search engine results.

3. Monitoring Website Health with Crawl Reports

Key Metrics in Crawl Reports

Crawl reports in Google Search Console provide several key metrics that can help you monitor your website’s health and performance:

- Pages Crawled per Day: This statistic displays how many pages on your website Googlebot visits each day. A steady crawl rate shows that Googlebot can access and routinely index your content.

- Data Downloaded per Day: This indicator displays the daily data downloads from your website by Googlebot. By keeping an eye on this number, you can make sure that Googlebot is processing your material effectively and that your website isn’t using too much bandwidth.

- Time Spent Downloading a Page: This metric captures the average time Googlebot needs to download a page from your website. A long average download time can be a sign of server or website performance problems, which can hurt both user experience and search engine rankings.

- Crawl Errors: Monitoring crawl errors is essential for keeping a website in good condition. These errors can make it difficult for Googlebot to access and index your content, which reduces its visibility in search results.
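If you want to cross-check these metrics against your own data, they can be approximated from server access logs. The Python sketch below assumes the log lines have already been parsed into tuples; the record layout, the `crawl_stats` function, and the sample entries are hypothetical and not a Search Console export format.

```python
from collections import defaultdict


def crawl_stats(records):
    """Aggregate per-day Googlebot activity.

    Each record is (date, user_agent, status, bytes_sent, response_ms).
    Returns {date: {"pages", "kilobytes", "avg_ms", "errors"}}.
    """
    totals = defaultdict(lambda: {"pages": 0, "bytes": 0, "ms": 0, "errors": 0})
    for date, user_agent, status, bytes_sent, response_ms in records:
        if "Googlebot" not in user_agent:
            continue  # skip human visitors and other crawlers
        day = totals[date]
        day["pages"] += 1
        day["bytes"] += bytes_sent
        day["ms"] += response_ms
        if status >= 400:
            day["errors"] += 1
    return {
        date: {
            "pages": day["pages"],                   # pages crawled per day
            "kilobytes": day["bytes"] / 1024,        # data downloaded per day
            "avg_ms": day["ms"] / day["pages"],      # average download time
            "errors": day["errors"],                 # 4xx/5xx responses
        }
        for date, day in totals.items()
    }


# Hypothetical, already-parsed log entries.
LOG = [
    ("2024-05-01", "Mozilla/5.0 (compatible; Googlebot/2.1)", 200, 2048, 100),
    ("2024-05-01", "Mozilla/5.0 (compatible; Googlebot/2.1)", 404, 1024, 300),
    ("2024-05-01", "Mozilla/5.0 (Windows NT 10.0)", 200, 4096, 90),
    ("2024-05-02", "Mozilla/5.0 (compatible; Googlebot/2.1)", 200, 5120, 150),
]

stats = crawl_stats(LOG)
for date, day in sorted(stats.items()):
    print(date, day)
```

Note that matching on the user-agent string alone is a simplification: anyone can send a request that claims to be Googlebot, so numbers derived this way are only a rough companion to the figures in Search Console.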

Identifying and Resolving Crawl Errors

To identify crawl errors, navigate to the “Crawl Errors” section of your Google Search Console account. This section lists any errors encountered during the crawling process, categorized as site errors or URL errors.

To resolve crawl errors, follow these steps:

- Investigate the issue: Click on an error in the crawl reports to view more information about the problem, such as the affected URL(s) and the date the error was detected.

- Diagnose the cause: Determine the root cause of the error. Common issues include server connectivity problems, DNS resolution errors, broken links, or misconfigured robots.txt files.

- Fix the issue: Once you’ve identified the cause, take the necessary steps to resolve the problem. This may involve updating your server settings, fixing broken links, or correcting your robots.txt file.

- Verify the fix: After resolving the issue, use the “Mark as Fixed” option in Google Search Console (labeled “Validate Fix” in newer versions) to tell Google that the error has been addressed. Googlebot will then re-crawl the affected URL(s) to confirm that the issue has been resolved.

You can maintain a healthy website, increase the visibility of your site in search results, and guarantee a satisfying user experience for your visitors by routinely checking and correcting crawl problems in your Google Search Console crawl reports.

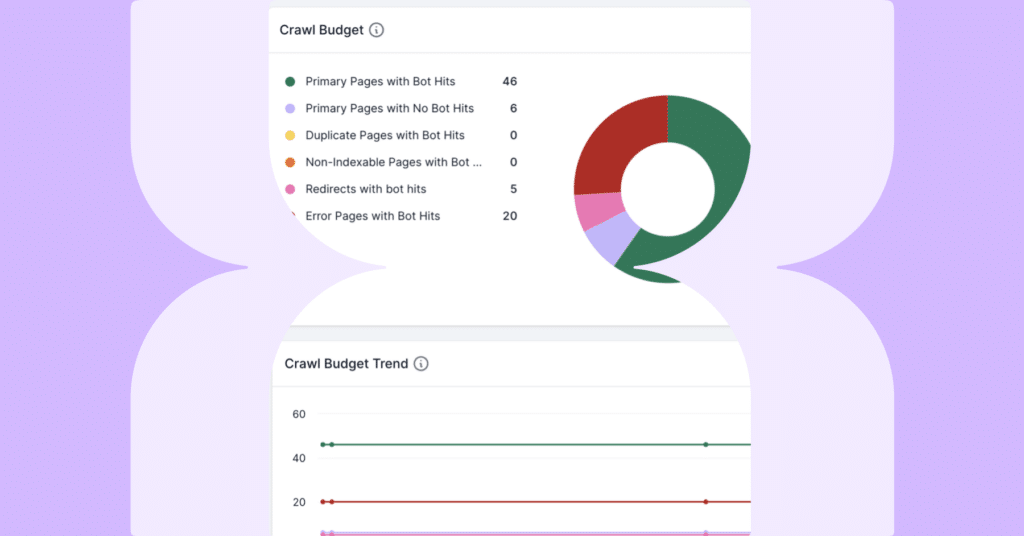

4. Analyzing Crawl Stats for Improved SEO

The Significance of Crawl Stats for SEO

Search engine optimization (SEO) relies heavily on crawl statistics because they show how Googlebot interacts with your website. They help you pinpoint problem areas and make sure that Google can quickly find and index your pages, which ultimately improves your search engine rankings. Keep track of crawl statistics to:

- Understand Googlebot’s activity on your site and uncover potential issues that may affect indexing.

- Optimize your website’s crawl budget, which refers to the number of pages Googlebot is willing to crawl and index during a specific period.

- Identify trends and patterns in your site’s crawl activity, allowing you to make informed decisions about content updates and website maintenance.

Tips on Optimizing Crawl Budget

By optimizing the crawl budget for your website, you can make sure that Googlebot concentrates on your most important and relevant content. Here are some ideas to help you get the most out of your crawl budget:

- Create a comprehensive XML sitemap: A current XML sitemap submitted to Google Search Console aids Googlebot in more effectively finding and prioritizing the content on your website.

- Fix crawl errors: Keep an eye out for and fix any crawl issues in Google Search Console on a regular basis since they might drain your crawl budget and hinder Googlebot from reaching useful information.

- Optimize site speed: A quick-loading website maximizes the utilization of your crawl budget by enabling Googlebot to crawl more pages in the same amount of time. Consider image optimization, browser caching, and the minification of CSS and JavaScript files to increase site speed.

- Use robots.txt wisely: Set your robots.txt file so that Googlebot won’t crawl low-value or redundant content. This will help to ensure that your crawl budget is spent on unique, high-quality pages.

- Minimize duplicate content: Duplicate content wastes crawl budget and can lower your search rankings. Use canonical tags to identify the preferred version of each page, and avoid publishing the same content under several URLs.

- Update content regularly: By regularly adding new, pertinent material to your website, you can encourage Googlebot to crawl and index it more frequently, increasing your website’s exposure in search results.

You may improve the SEO effectiveness and exposure of your website by looking at crawl statistics in Google Search Console and managing your crawl budget.
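Before deploying a robots.txt change of the kind described above, it can help to check that the rules block only what you intend. The following is a small sketch using Python’s standard `urllib.robotparser` module; the robots.txt content, the URL list, and the `blocked_urls` helper are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that keeps crawlers out of low-value sections.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Pages you expect Googlebot to reach, plus one internal search URL.
URLS = [
    "https://example.com/products/widget",
    "https://example.com/blog/launch",
    "https://example.com/search?q=widget",
]

print(blocked_urls(ROBOTS_TXT, URLS))
```

With these sample rules, only the internal search URL is reported as blocked. Keep in mind that the standard-library parser does simple prefix matching and does not interpret the `*` and `$` wildcards the way Google does, so treat the result as a first sanity check rather than a guarantee of Googlebot’s behavior.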

5. Sitemaps and Crawl Reports

The Relationship between Sitemaps and Crawl Reports

Google Search Console’s sitemaps and crawl reports work in tandem to improve your website’s crawlability and search engine performance. A sitemap is a structured list of the pages on your website, usually in XML format, that tells search engines how the content on your site is organized. Crawl reports, on the other hand, tell you how Googlebot actually crawls your website, including the pages it visits, the data it downloads, and any errors it runs into.

The combination of sitemaps and crawl reports enables you to:

- Guide Googlebot to discover and prioritize your site’s most important content.

- Monitor the crawling process and identify any issues that may affect indexing or search engine rankings.

- Make informed decisions about website improvements and content updates based on the data provided in both sitemaps and crawl reports.

Using Sitemaps to Improve Crawlability

Sitemaps may dramatically improve the crawlability of your website, enabling search engines like Google to quickly find and index your content. Here are some pointers for making the most of sitemaps:

- Create a comprehensive XML sitemap: Make sure your XML sitemap appropriately reflects the structure and hierarchy of your website, as well as all the vital pages on it. Update your sitemap frequently to add new pages and delete out-of-date information.

- Submit your sitemap to Google Search Console: Once you have created an XML sitemap, submit it to Google Search Console to give Googlebot a map of the content on your website. This helps Googlebot find and prioritize your pages more effectively.

- Monitor sitemap status and errors: Check the status of your submitted sitemaps in Google Search Console frequently, and take care of any errors or warnings that are raised. Fixing sitemap problems can make your website easier to crawl.

- Use sitemap data in conjunction with crawl reports: Crawl reports and sitemap data should be combined for analysis in order to spot trends, patterns, and potential indexing and crawling problems. Make informed judgments regarding website changes and content improvements using these findings.

With the combined strength of sitemaps and crawl reports, you can make sure that search engines can quickly find and index your website, which will ultimately improve your search engine rankings and increase the exposure of your content.
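As an illustration of what a basic XML sitemap contains, the sketch below builds one with Python’s standard `xml.etree.ElementTree` module. The `build_sitemap` function and the page list are assumptions for this example; only the `urlset`, `url`, `loc`, and `lastmod` elements and the namespace come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML (as UTF-8 bytes) for (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. 2024-05-01
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical pages and last-modified dates.
PAGES = [
    ("https://example.com/", "2024-05-01"),
    ("https://example.com/blog", "2024-04-18"),
]

sitemap_bytes = build_sitemap(PAGES)
print(sitemap_bytes.decode("utf-8"))
```

In practice most content management systems generate this file for you; a script like this is mainly useful for small static sites or for understanding what the submitted file should look like.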

6. Fixing Crawl Errors for a Healthy Website

Common Crawl Errors and Their Resolutions

Crawl errors can negatively impact your website’s search engine performance and user experience. Some common crawl errors and their solutions are:

- 404 Not Found: Googlebot encounters this error when it requests a page that does not exist on your website. To resolve it, restore the missing page, update internal links to point to the correct URL, or set up a 301 redirect from the broken URL to an appropriate existing page.

- Server Connectivity Errors: These errors occur when your web server has problems such as timeouts or refused connections. To fix them, check your server logs for the cause and work with your hosting company to resolve it.

- DNS Resolution Errors: This error happens when Googlebot is unable to resolve the domain name of your website. To resolve it, verify your domain’s DNS settings with your domain registrar and make sure the domain points to your web server correctly.

- Blocked Resources: Blocked resources are parts of your website, such as images or scripts, that Googlebot cannot access because of restrictions in your robots.txt file. To address this problem, update your robots.txt file so that Googlebot is allowed to access those resources.

- Mobile Usability Errors: When Googlebot notices problems with your website’s mobile version, such as small font sizes or material that isn’t properly optimized for mobile devices, these errors will appear. Use responsive design strategies and adhere to Google’s mobile-friendly guidelines to correct issues with mobile usability.
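The error categories above can be summarized as a simple triage routine. The Python sketch below is one possible way to encode them; the `CrawlAttempt` fields, the category labels, and the `diagnose` function are hypothetical and do not correspond to any Search Console data structure. Mobile usability is left out because it is not determined by a single request.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CrawlAttempt:
    """Outcome of one hypothetical crawl attempt."""
    url: str
    status: Optional[int] = None     # HTTP status code, if a response arrived
    dns_resolved: bool = True
    connected: bool = True           # False for timeouts or refused connections
    blocked_by_robots: bool = False


REMEDIES = {
    "DNS resolution error": "Verify the domain's DNS settings with your registrar.",
    "Server connectivity error": "Check server logs and contact your hosting company.",
    "Blocked resource": "Update robots.txt to allow Googlebot access.",
    "404 Not Found": "Restore the page, fix internal links, or add a 301 redirect.",
}


def diagnose(attempt):
    """Return (category, remedy) for a failed attempt, or None if none applies."""
    if not attempt.dns_resolved:
        category = "DNS resolution error"
    elif not attempt.connected:
        category = "Server connectivity error"
    elif attempt.blocked_by_robots:
        category = "Blocked resource"
    elif attempt.status == 404:
        category = "404 Not Found"
    else:
        return None
    return category, REMEDIES[category]


print(diagnose(CrawlAttempt("https://example.com/old-post", status=404)))
print(diagnose(CrawlAttempt("https://example.com/", connected=False)))
```

The checks run from the outermost failure inward (DNS, then connection, then robots.txt, then the HTTP response), which mirrors the order in which a request can fail.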

The Importance of Addressing Crawl Errors in a Timely Manner

To keep your website healthy and performing well in search results, crawl errors must be fixed swiftly. Fixing crawl errors promptly:

- Prevents loss of search engine visibility: Crawl mistakes can cause your website’s content to be poorly indexed, which lowers its visibility in search engine results.

- Protects user experience: Broken links or unavailable material on your website may result from unfixed crawl problems, which could have a negative effect on user experience and possibly raise bounce rates.

- Preserves your website’s reputation: Users and search engines are more likely to view a website as trustworthy and reputable if it is well-maintained and has few crawl problems.

- Enhances SEO efforts: By promptly fixing crawl errors, you prevent technical difficulties from undermining your SEO efforts and ensure that Googlebot can successfully crawl and index your website’s content.

Your website’s health, search engine performance, and user experience can all be improved by routinely checking for and fixing crawl problems in Google Search Console.

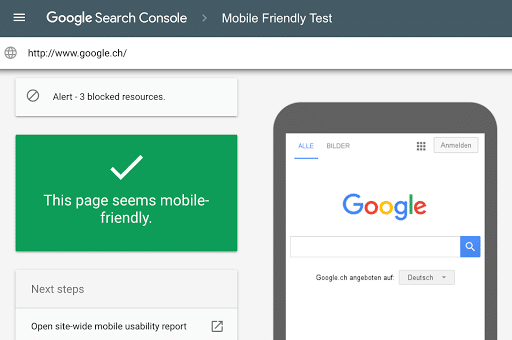

7. Mobile Usability and Crawl Reports

Relevance of Mobile Usability in Crawl Reports

Mobile usability has become a critical component in website performance and search engine results due to the rise in mobile users. The mobile usability section of Google Search Console’s crawl reports exposes problems found by Googlebot while crawling the mobile version of your site. These problems can have a severe effect on the mobile user experience and search engine rankings of your website, so it’s critical to keep an eye on them and take action as soon as possible.

Improving Mobile User Experience Based on Crawl Reports

Crawl reports offer insightful information about problems with mobile usability that you may utilize to improve the mobile user experience on your website. Here are some pointers for maximizing mobile usability using crawl reports:

- Identify mobile usability issues: Check your crawl reports’ mobile usability section frequently to find any issues or warnings that Googlebot has found. Small font sizes, content that isn’t properly optimized for mobile devices, and issues with viewport setup are common challenges.

- Resolve detected issues: Take the appropriate actions to address any issues with mobile usability once you’ve identified them. To make sure that your content scales correctly on mobile devices, this may entail modifying font sizes, putting responsive design strategies into practice, or configuring viewport settings.

- Test your site’s mobile-friendliness: Use Google’s Mobile-Friendly Test tool to assess how well your website works on mobile devices and to get further suggestions for enhancements. You can use this tool to find places for optimization that the crawl reports might not have mentioned.

- Monitor your site’s performance: To evaluate the success of your mobile usability changes, you should frequently monitor the performance metrics of your website, such as page load times and bounce rates. To further improve the mobile user experience, tweak as necessary.

- Stay up-to-date with mobile usability best practices: It’s crucial to keep up with the most recent mobile usability best practices and recommendations as mobile usage continues to develop. Use these strategies while designing and building your website to give mobile consumers a seamless and satisfying experience.

You can give mobile users a better experience, enhance your website’s search engine performance, and ultimately boost your site’s success by using Google Search Console’s crawl reports to find and fix mobile usability problems.
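Viewport configuration is one of the mobile usability issues mentioned above that is easy to check yourself. Here is a rough Python sketch that looks for a viewport meta tag in a page’s HTML; the `viewport_problem` function and its messages are assumptions for illustration and cover only this one check, not everything Google evaluates.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Records the content of <meta name="viewport">, if present."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.content = attributes.get("content") or ""


def viewport_problem(html):
    """Return a short description of a viewport issue, or None if it looks fine."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return "viewport not set"
    if "width=device-width" not in finder.content.replace(" ", ""):
        return "viewport not set to device-width"
    return None


GOOD = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
FIXED_WIDTH = '<head><meta name="viewport" content="width=1024"></head>'
NO_TAG = "<head><title>Shop</title></head>"

for page in (GOOD, FIXED_WIDTH, NO_TAG):
    print(viewport_problem(page))
```

A check like this fits well into a pre-publish script, so that templates without a responsive viewport are caught before Googlebot crawls them.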

8. Monitoring Security Issues with Crawl Reports

How Crawl Reports Can Help Identify Security Issues

Google Search Console’s crawl reports can assist in identifying security issues that may impact your website’s performance, user experience, and search engine rankings. While crawl reports may not directly indicate specific security vulnerabilities, they can reveal symptoms of security issues, such as:

- Unexpected crawl errors: Sudden spikes in crawl errors, especially for previously accessible pages, may indicate unauthorized changes to your website’s content or server configuration, potentially caused by hacking or malware.

- Unusual server response codes: If Googlebot encounters unexpected server response codes, such as 500 Internal Server Errors, it may be a sign of server misconfigurations or security breaches.

- Blocked resources: If your crawl reports show blocked resources that should be accessible to Googlebot, it may indicate incorrect configurations or unauthorized modifications to your robots.txt file.
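A sudden spike in crawl errors is easier to notice when you compare each day with the days before it. The sketch below shows one simple way to do that in Python; the seven-day window, the threshold factor of 3, the minimum of 10 errors, and the sample counts are arbitrary assumptions that you would tune for your own site.

```python
from statistics import mean


def error_spikes(daily_errors, window=7, factor=3.0, min_errors=10):
    """Return indexes of days whose error count is far above the recent average.

    A day counts as a spike when it has at least `min_errors` errors and more
    than `factor` times the mean of the preceding `window` days.
    """
    spikes = []
    for i in range(window, len(daily_errors)):
        baseline = mean(daily_errors[i - window:i])
        today = daily_errors[i]
        # max(baseline, 1) avoids flagging tiny counts after error-free weeks
        if today >= min_errors and today > factor * max(baseline, 1):
            spikes.append(i)
    return spikes


# Hypothetical daily crawl-error counts; day 8 is a clear outlier.
COUNTS = [4, 6, 5, 3, 5, 4, 6, 5, 48, 7]

print(error_spikes(COUNTS))
```

A flagged day is only a prompt to investigate. A spike can just as well come from a site migration or a server outage as from a security incident.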

Suggestions on Resolving Security-Related Crawl Errors

To address security-related crawl errors, consider the following steps:

- Investigate the issue: Examine your crawl reports for any odd errors or patterns that might point to security problems. Keep an eye out for unusual server response codes, blocked resources, or increases in crawl errors.

- Check server logs and configurations: Check the logs and configurations of your server for any unauthorized modifications or unusual activities. This might assist you in figuring out if your website has been compromised or if any misconfigurations are to blame for the issues.

- Update software and plugins: Make sure that the software, plugins, and themes on your website are current and secure. Outdated software can leave your website vulnerable to security breaches and crawl issues.

- Implement proper access controls: Secure your website’s admin area and hosting account with strong passwords, two-factor authentication, and other security measures. This reduces the risk of unauthorized access and of the crawl errors that a compromised site can cause.

- Monitor for malware and hacking attempts: Check your website for malware on a regular basis, and utilize security plugins or services to keep an eye out for hacking attempts. To maintain a secure website and reduce the chance of security-related crawl problems, promptly repair any issues found.

- Fix any security issues: Take fast action to address security breaches or vulnerabilities if you find them. Fixing the problem can entail removing infected files, restoring a backup, or consulting a security expert.

You can maintain a secure and healthy website, ensuring peak performance and a satisfying user experience, by routinely reviewing your crawl reports and taking care of potential security issues.

9. Leveraging Crawl Reports for International Targeting

How Crawl Reports Can Aid in International Targeting

Google Search Console’s crawl reports can offer helpful data for targeting international audiences. By examining crawl reports, you can:

- Monitor the indexing of your multilingual and multinational content: Crawl reports show how Googlebot crawls and indexes the content on your website, including content aimed at different languages and regions. By keeping an eye on how your international content is being indexed, you can find potential problems or areas for improvement.

- Identify and resolve crawl errors related to international targeting: Crawl reports can reveal issues specific to your international content, such as incorrectly implemented hreflang tags, that may affect how visible your website is in the intended regions or languages.

- Analyze Googlebot’s crawling behavior for different language versions: You may improve your international targeting approach by being aware of how Googlebot indexes and crawls the various language versions of your website.

Tips on Using Hreflang Tags and Crawl Reports for Improved Global Reach

To leverage crawl reports for enhanced international targeting, consider the following tips:

- Implement hreflang tags correctly: Hreflang tags are crucial for telling Google which languages and regions are targeted by the content on your website. Make sure you adhere to Google’s recommendations and correctly incorporate hreflang tags on all pertinent pages.

- Monitor hreflang errors in crawl reports: Keep an eye out for hreflang-related issues in your crawl reports, such as missing return links or inaccurate language codes. Quickly fixing these issues will increase your website’s exposure in the relevant countries and languages.

- Optimize crawl budget for international content: By adjusting your website’s crawl budget, you can make sure that Googlebot can efficiently crawl and index your international content. To prioritize the crawling of your international material, you might need to update your robots.txt file or create distinct XML sitemaps for each language version.

- Test international targeting with Google’s URL Inspection tool: Use the URL Inspection tool in Google Search Console to check how your international targeting works for particular URLs. This can help you find and fix problems that are not apparent from the crawl reports.

- Stay informed about international SEO best practices: Ensure that your website’s targeting efforts are successful and comply with Google’s recommendations by staying up to speed with the most recent international SEO best practices and guidelines.

You may improve your website’s international targeting efforts and make sure that your content is seen by the correct people in the desired countries and languages by utilizing crawl reports and effective hreflang implementation.
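Missing return links are one of the hreflang errors mentioned above: if page A lists page B as an alternate, page B must list page A in return. The Python sketch below checks this on a small set of annotations; the `missing_return_links` function, the dictionary layout, and the URLs are hypothetical, and collecting the annotations from your real pages is left out.

```python
def missing_return_links(hreflang):
    """Find hreflang annotations that are not reciprocated.

    `hreflang` maps a page URL to {language code: alternate URL}.
    Returns a sorted list of (source, target) pairs where source points to
    target but target does not point back to source.
    """
    problems = []
    for source, alternates in hreflang.items():
        for target in alternates.values():
            if target == source:
                continue  # self-references need no return link
            back_links = hreflang.get(target, {}).values()
            if source not in back_links:
                problems.append((source, target))
    return sorted(problems)


# Hypothetical annotations: the German page forgets to link back to English.
ANNOTATIONS = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
    },
    "https://example.com/de/": {
        "de": "https://example.com/de/",
    },
}

print(missing_return_links(ANNOTATIONS))
```

In this sample, the English page points to the German page but not the other way around, so the pair is reported. Adding an `en` entry to the German page’s annotations would clear it.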

10. Staying Ahead with Google Search Console Updates

The Importance of Staying Updated with Google Search Console Features

Google Search Console is an essential tool for website owners, providing valuable insights and resources to optimize site performance and search engine rankings. Staying up-to-date with the latest Google Search Console features and updates is crucial for several reasons:

- Maximize the tool’s potential: Google Search Console is improved frequently and gains new features with each update. By keeping up with these changes, you can use the tool to optimize your website to the fullest extent.

- Stay compliant with Google’s guidelines: Updates to Google Search Console may reflect changes to Google’s webmaster guidelines or introduce new requirements for website owners. Staying current with these updates makes it easier to remain compliant and avoid potential penalties.

- Adapt to evolving SEO landscape: With Google and other search engines often altering their algorithms and ranking variables, the SEO industry is continuously changing. You can modify your SEO approach to conform to the most recent trends and best practices by keeping up with Google Search Console updates.

Recent and Upcoming Updates Related to Crawl Reports

To stay informed about the latest updates and features in Google Search Console, consider the following tips:

- Follow the Google Search Central Blog (formerly the Google Webmaster Central Blog): Google routinely posts news, updates, and best practices for Google Search Console and other Google tools on its official blog for site owners. Subscribe to the blog if you want to be notified whenever a new post is published.

- Participate in SEO communities: Internet communities including forums, social media groups, and business networks can be great resources for up-to-date information on Google Search Console changes. Join these online forums to remain informed and interact with other SEO experts.

- Attend industry events and webinars: Presentations and conversations regarding the most recent trends and improvements in SEO, including Google Search Console, are frequently featured at industry events, conferences, and webinars. You can keep current and get knowledge from industry leaders by attending these events.

- Subscribe to SEO newsletters and blogs: Many SEO specialists and agencies cover the latest SEO news and updates in their newsletters and blog articles. By subscribing to these resources, you can keep up with changes to Google Search Console and other significant SEO tools.

You can make sure your website keeps performing well in search engine results and offers a better user experience for your visitors by staying on top of Google Search Console upgrades.

Conclusion

Crawl reports from Google Search Console are essential for tracking and improving website performance. They show you how Googlebot crawls and indexes your website, helping you find and fix crawl errors, strengthen your SEO efforts, improve mobile usability, address security concerns, and reach global audiences more effectively.

Website owners may maintain the health, visibility, and usability of their sites by routinely analyzing and acting on the data provided by crawl reports. To modify your SEO approach and keep a competitive edge as the digital landscape continues to change, it’s critical to keep up with Google Search Console features and improvements.

Using Google Search Console crawl data to its full potential is essential for improving website performance and providing a top-notch user experience. By staying informed and proactively fixing problems and improving your site, you can achieve long-term success in the dynamic world of internet search.